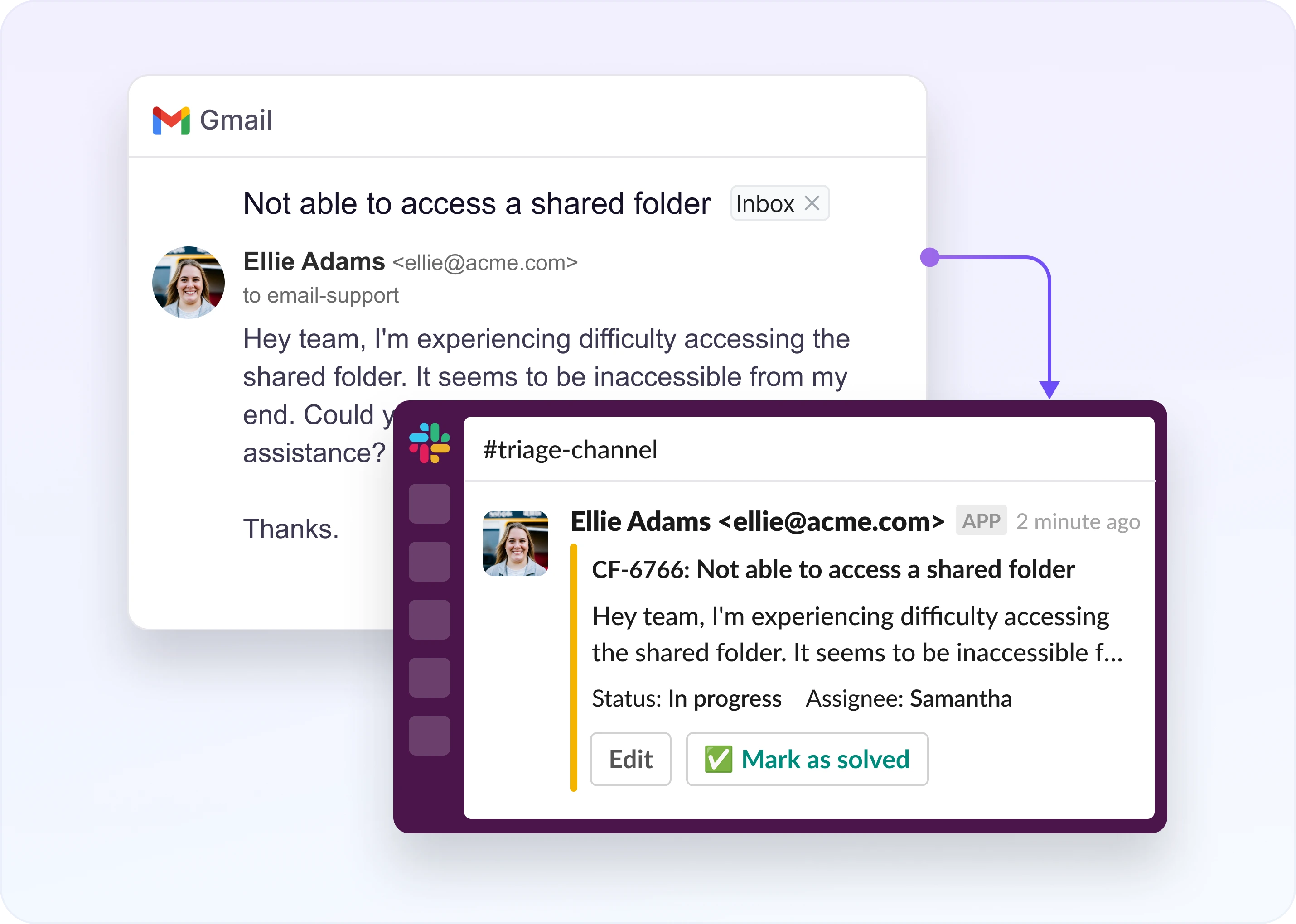

Your Slack is already handling support. Requests come in through DMs, channels, and threads. Someone answers, or doesn't. There's no owner. No record of what got resolved.

You start looking at Slack helpdesk bots.

Every tool promises automation and faster responses. The differences show up when you test them. Some convert messages to tickets. Some automate workflows. A few turn Slack into a real support system.

This guide covers 12 features that matter when support runs in Slack, grouped into four categories: core ticket operations, intelligence and automation, visibility and reporting, and experience and deployment. You'll know what to look for (and verify) before choosing a tool your team will depend on.

- Core operations: Ticketing and request management, assignment and routing, SLA and response time tracking

- Intelligence and automation: AI and automation, internal collaboration, custom fields and data collection

- Visibility and reporting: Integrations, reporting and analytics, web app interface

- Experience and deployment: Customer experience, security and permissions, implementation and support

TL;DR

A good Slack helpdesk bot should turn Slack conversations into owned, trackable support workflows with routing, SLAs, AI, collaboration, analytics, and security.

The gist

- Core features include ticket capture, automatic thread grouping, split/merge, unified triage, assignment, routing, and SLA tracking.

- Scalable routing should account for workload, availability, ticket type, priority, and customer tier.

- AI needs guardrails: controlled knowledge sources, customer-history restrictions, prompt tuning, and safe customer-facing behavior.

- Strong bots support private internal comments, custom fields, bidirectional integrations, automated reporting, and CSAT tracking.

- Customer experience and security matter too: hide noisy ticket updates, support OOO responses, enforce RBAC, keep audit trails, and meet SOC 2/DPA expectations.

Worth knowing: Test the details during a trial, because “has SLA tracking” is very different from SLA thresholds, pause logic, and tiered alerts that match your real workflow.

1. Ticketing and Request Management

Can a bot distinguish support requests from normal Slack conversations without someone manually sorting through every message? It sounds straightforward, but it breaks down fast.

Most teams start by doing this manually. There's a pinned message telling customers to use a certain command. Or a shared spreadsheet. Or someone who reads every channel and decides what counts as a ticket. We've seen teams run 25+ customer Slack channels this way, pulling data into Google Sheets each week. It holds up for a while.

The volume of messages usually isn't the problem. The problem is that nothing is consolidated. A customer sends four follow-ups before anyone responds. That should be one issue, but without automatic thread grouping, it becomes four separate tickets.

Split and merge matters here. Customers often put multiple problems into a single thread. Separating those by hand takes time, and you're doing it when things are already backed up.

Most teams also underestimate the value of triage. When you're keeping track of 15 different channels, the switching itself is overhead. Having one place where all requests appear, with ticket IDs, status, priority, and who's handling it, makes the system workable past the first month.
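To make the thread-grouping idea concrete, here is a minimal sketch of how follow-ups might be folded into one ticket. Everything in it is an assumption for illustration: the `Ticket` shape, the `ingest` function, and the 15-minute `GROUP_WINDOW_SECONDS` window are hypothetical, not how any particular bot works.

```python
from dataclasses import dataclass, field

# Hypothetical window: follow-ups from the same customer in the same channel
# within this many seconds count as the same issue.
GROUP_WINDOW_SECONDS = 15 * 60


@dataclass
class Ticket:
    ticket_id: int
    channel: str
    customer: str
    messages: list = field(default_factory=list)
    last_message_at: float = 0.0
    agent_replied: bool = False


def ingest(tickets, channel, customer, text, timestamp):
    """Attach a message to an unanswered recent ticket, or open a new one."""
    for ticket in tickets:
        same_source = ticket.channel == channel and ticket.customer == customer
        recent = timestamp - ticket.last_message_at <= GROUP_WINDOW_SECONDS
        if same_source and recent and not ticket.agent_replied:
            ticket.messages.append(text)
            ticket.last_message_at = timestamp
            return ticket
    new_ticket = Ticket(len(tickets) + 1, channel, customer, [text], timestamp)
    tickets.append(new_ticket)
    return new_ticket


# Four follow-ups, one minute apart, before anyone responds.
tickets = []
follow_ups = ["Login is broken", "Still broken", "Anyone there?", "Hello?"]
for minute, text in enumerate(follow_ups):
    ingest(tickets, "#acme-support", "dana", text, timestamp=minute * 60.0)

print(len(tickets), len(tickets[0].messages))
```

With grouping, the four messages land on a single ticket. During a trial, the equivalent check is simple: send several follow-ups in a row and count how many tickets appear.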

What To Verify

Can the bot group follow-up messages into a single thread automatically? Can you split and merge tickets after they are created? Is there a unified triage view across all channels?

Capturing a ticket doesn't mean it's handled. Once a request is in the system, someone needs to own it. That handoff is where teams run into a different set of problems.

2. Assignment and Routing

Auto-assigning to the first responder is table stakes. What happens when that person is at capacity, out of the office, or the wrong person for that type of request?

Teams that start with one support person rarely think about routing. One person is the routing. The moment a second person joins, or the first takes a vacation, problems show up. Requests land with whoever happens to see them first. High-priority customers wait as long as free-tier users. Bugs go to the same queue as billing questions.

This is where the gap between tools built for demos and tools built for scale becomes obvious.

Any tool will route to an agent. Fewer will route the right type of ticket to the right agent automatically, based on workload rather than round-robin rotation. Availability-aware routing, where OOO agents are automatically skipped rather than manually managed, is something you won't think about until someone's on vacation and the queue backs up at 11 A.M. on a Tuesday.
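The difference between round-robin and workload-aware routing is easier to see in a few lines of code. This is a sketch under stated assumptions: the `Agent` fields and the `pick_agent` function are hypothetical names, and real tools will weigh more signals than open ticket count.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Agent:
    name: str
    skills: set
    open_tickets: int
    out_of_office: bool = False


def pick_agent(agents, ticket_type) -> Optional[Agent]:
    """Pick the available, qualified agent with the fewest open tickets."""
    candidates = [
        agent for agent in agents
        if not agent.out_of_office and ticket_type in agent.skills
    ]
    if not candidates:
        return None  # nobody eligible: leave the ticket in the triage queue
    return min(candidates, key=lambda agent: agent.open_tickets)


agents = [
    Agent("Ana", {"billing", "bug"}, open_tickets=7),
    Agent("Ben", {"bug"}, open_tickets=2, out_of_office=True),
    Agent("Caro", {"bug"}, open_tickets=4),
]

bug_owner = pick_agent(agents, "bug")
print(bug_owner.name if bug_owner else "unassigned")
```

Ben has the lightest load but is out of office, so the bug goes to Caro rather than Ana. A pure round-robin rotation would ignore both facts.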

What To Verify

Does routing consider workload instead of just round-robin? Are OOO agents automatically skipped? Can you set routing rules by ticket type, priority, or customer tier?

Getting the right ticket to the right person is half the equation. Making sure that person acts before the customer gives up waiting is the other half.

3. SLA and Response Time Tracking

This feature sounds solved in almost every tool. It usually isn't.

We've seen teams with a genuine 5-minute response SLA running on plain Slack with no timer, no alert, no escalation. A message would sit for 12 minutes, sometimes longer, and nobody knew. The person who was supposed to respond had moved on to something else. The customer was waiting. Nobody was watching.

Most tools handle the basics: configuring a first response time and a resolution time. The specifics are where they diverge.

Real SLA tracking differs from a dashboard nobody checks in three ways: different thresholds by priority or customer tier, pause logic when the customer goes quiet, and tiered alerts rather than a single notification that's easy to miss.

That last point deserves more weight than it usually gets. An SLA alert that nobody sees is worse than no alert at all. It gives you a false sense of confidence that you have coverage. Check where alerts go, who receives them, and whether the escalation is tiered. A single notification at the SLA boundary is easy to miss. Tiered escalation (5 minutes out, then 10, then the manager) gets the right people notified before a breach becomes a relationship problem.
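Priority-based thresholds, pause logic, and tiered alerts can be sketched together in one small model. The targets in `SLA_MINUTES`, the tiers in `ALERT_TIERS`, and the `SlaClock` class are all hypothetical values and names chosen for illustration. Your own thresholds and recipients will differ.

```python
from dataclasses import dataclass

# Hypothetical SLA targets, in minutes, keyed by priority.
SLA_MINUTES = {"urgent": 5, "high": 30, "normal": 240}

# Hypothetical escalation tiers: (fraction of the SLA used, who is notified).
ALERT_TIERS = [(0.5, "assignee"), (0.8, "team channel"), (1.0, "manager")]


@dataclass
class SlaClock:
    priority: str
    elapsed_minutes: float = 0.0
    paused: bool = False  # True while the team is waiting on the customer

    def tick(self, minutes: float) -> None:
        """Advance the clock, unless it is paused."""
        if not self.paused:
            self.elapsed_minutes += minutes

    def alerts_due(self) -> list:
        """Return every recipient whose tier has been reached."""
        used = self.elapsed_minutes / SLA_MINUTES[self.priority]
        return [who for fraction, who in ALERT_TIERS if used >= fraction]


clock = SlaClock("urgent")
clock.tick(3)            # 3 of 5 minutes used: first tier reached
print(clock.alerts_due())
clock.paused = True      # customer has gone quiet
clock.tick(10)           # paused time does not count against the SLA
clock.paused = False
clock.tick(2)            # 5 of 5 minutes used: every tier reached
print(clock.alerts_due())
```

The point of the tiers is that the assignee hears about the ticket well before the manager does, and the manager only hears about it at the boundary.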

What To Verify

Can you set different SLA thresholds by priority or customer tier? Does the timer pause when the customer hasn't responded? Are alerts tiered, or just a single notification at the boundary?

SLA management tells you when something is about to go wrong. Automation aims to prevent things from going wrong in the first place. But it also has the widest gap between what's marketed and what's actually safe to deploy.

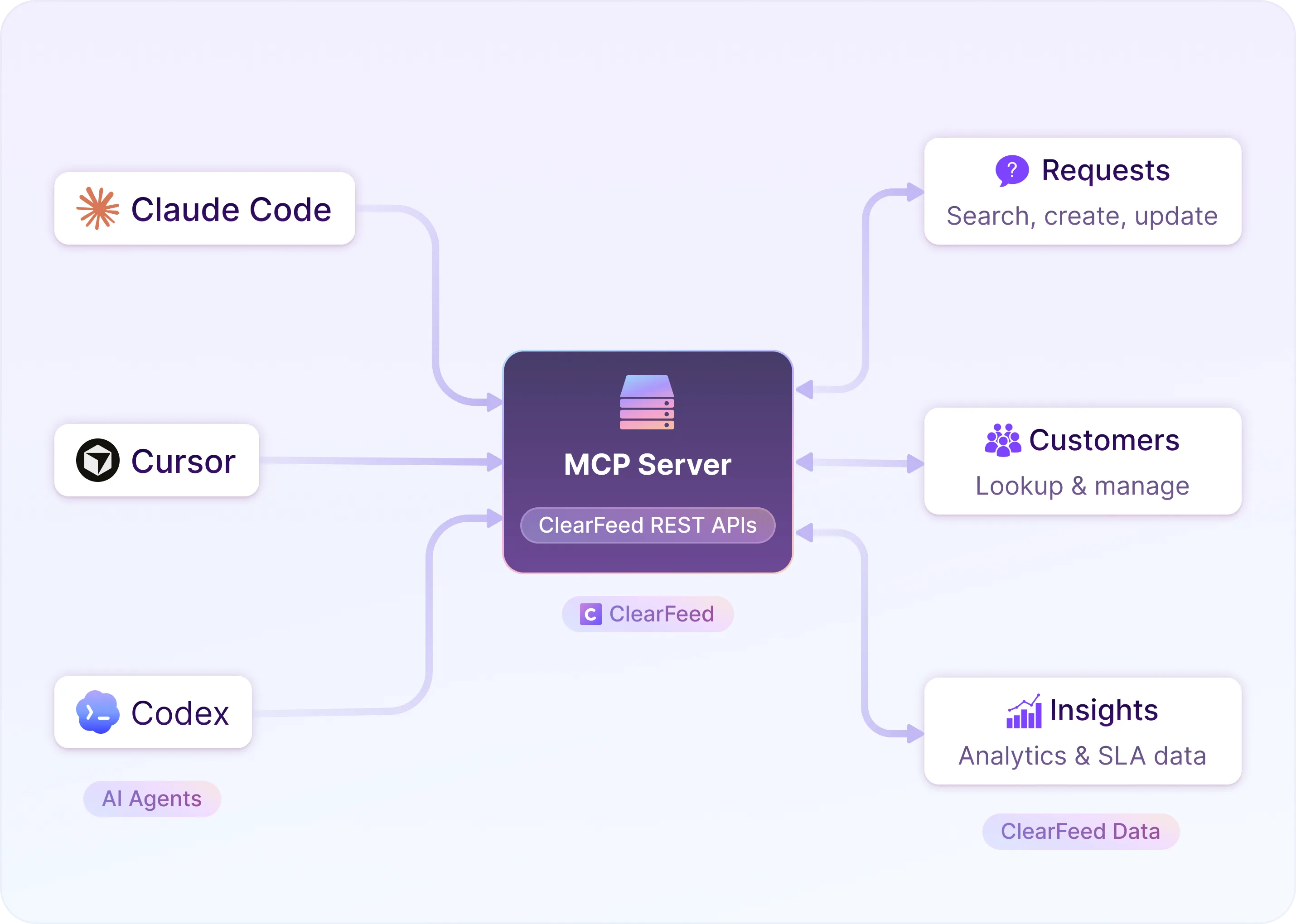

4. AI and Automation

The most important distinction in this category is between AI that's visible only to agents and AI that talks directly to customers.

Agent-facing AI (suggested replies, surfacing related tickets, auto-populated fields) is almost always a net positive. It speeds up the agent without introducing new risk.

Customer-facing AI is a higher bar. We've seen teams set up an AI agent that learned from past conversations, only to realize it was surfacing threads from other customers to new ones. The tool wasn't broken. Nobody thought to control what knowledge sources the AI could access.

Before trusting any customer-facing AI feature, check which knowledge sources it can see. Check whether you can restrict it from pulling in customer conversation history. Check whether you control its persona and name. Check whether the custom prompt configuration lets you tune how it handles edge cases or topics it shouldn't touch. These settings determine whether the AI helps or creates its own support incident.
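As a rough illustration of what a knowledge-source guardrail does, the sketch below filters what an AI is allowed to quote before it answers. The `Snippet` type, the source names, and the `allowed_snippets` function are assumptions made up for this example, not any vendor's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Snippet:
    source: str                 # e.g. "help_center" or "ticket_history"
    customer_id: Optional[str]  # set only for customer-specific content
    text: str


def allowed_snippets(snippets, asking_customer_id, allowed_sources,
                     allow_own_history=False):
    """Keep only the content the AI may use when answering this customer."""
    result = []
    for snippet in snippets:
        if snippet.source not in allowed_sources:
            continue
        if snippet.customer_id is not None:
            # Customer-specific content never crosses accounts.
            if not allow_own_history or snippet.customer_id != asking_customer_id:
                continue
        result.append(snippet)
    return result


snippets = [
    Snippet("help_center", None, "How to reset a password"),
    Snippet("ticket_history", "acme", "Acme outage thread"),
    Snippet("ticket_history", "globex", "Globex billing dispute"),
]

safe = allowed_snippets(snippets, "acme", {"help_center"})
print([snippet.text for snippet in safe])
```

Even when conversation history is switched on, the filter only lets a customer's own history through. That is the behavior to confirm in a trial: ask the AI a question from one test account and see whether another account's threads ever surface.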

Look at automation more broadly. Scheduled automations (proactive announcements, maintenance updates, follow-up nudges) matter more than people realize. One team we worked with had a single person manually sending product update announcements to hundreds of external Slack channels, one at a time. It was a single point of failure. Nobody had named it as a problem yet.

What To Verify

Can you control which knowledge sources the AI accesses? Can you restrict customer conversation history from being surfaced? Is there a custom prompt configuration? Does the tool support scheduled, segmented announcements?

AI handles what can be automated. Most support tickets eventually need a human, usually someone who isn't on the support team.

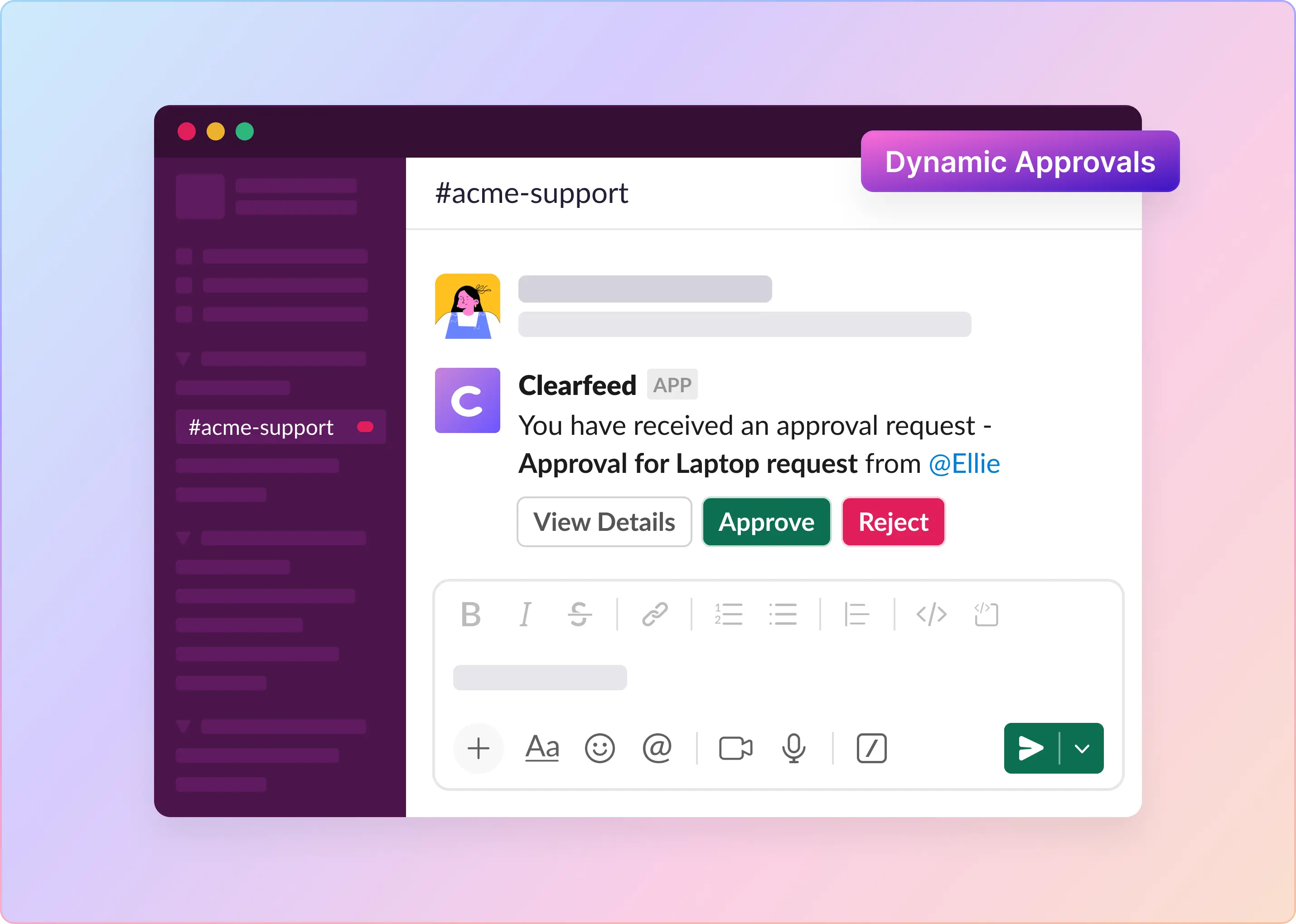

5. Internal Collaboration

Support tickets regularly need input from people outside support: an engineer for a bug, an account manager for context on a tricky customer, a product owner for a feature request. Whether that collaboration stays invisible to the customer matters.

Private internal comments, the ability to tag teammates without triggering a customer notification, and full internal comment threads per ticket let five people coordinate behind the scenes while the customer sees a clean, professional conversation on their end.

Without them, teams work around the tool. Slack DMs about tickets. Email threads that never make it back into the record. Institutional knowledge that lives in someone's head until they leave.

The operational cost of that workaround is hard to see until a ticket goes sideways and nobody can reconstruct what happened.

What To Verify

Can agents leave private comments invisible to the customer? Can you tag teammates without triggering a customer notification? Is there a full internal comment thread per ticket?

Collaboration works when the right people find the right ticket. Finding the right ticket requires structure.

6. Custom Fields and Data Collection

Generic intake works fine for simple support. Once you have different customer segments, multiple product lines, or tiered SLAs, you need to capture structured data at intake. And some of it should stay agent-only.

The ability to create fields is the minimum. Visibility control (agent-only vs. customer-facing) matters. Filtering and searching by field value matters. Webhook or export support for pushing field data to external systems as tickets are created or updated matters. A field that exists but can't be filtered on isn't a reporting field. It's a note.
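Visibility control and filtering are simple to express, which makes them easy to test for. In this sketch the field names (`product_area`, `churn_risk`) and the helper functions are hypothetical, chosen only to show the two behaviors side by side.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldDef:
    name: str
    agent_only: bool


# Hypothetical field definitions for illustration.
FIELDS = [
    FieldDef("product_area", agent_only=False),
    FieldDef("churn_risk", agent_only=True),
]


def customer_view(ticket_fields: dict) -> dict:
    """Drop agent-only fields before anything is shown to the customer."""
    hidden = {definition.name for definition in FIELDS if definition.agent_only}
    return {name: value for name, value in ticket_fields.items() if name not in hidden}


def filter_by_field(tickets: list, name: str, value: str) -> list:
    """Return the tickets whose custom field matches the given value."""
    return [ticket for ticket in tickets if ticket.get(name) == value]


tickets = [
    {"id": 1, "product_area": "billing", "churn_risk": "high"},
    {"id": 2, "product_area": "api", "churn_risk": "low"},
    {"id": 3, "product_area": "billing", "churn_risk": "low"},
]

print(customer_view(tickets[0]))
print([ticket["id"] for ticket in filter_by_field(tickets, "product_area", "billing")])
```

If a tool can do the first but not the second, the field is stored but not reportable.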

AI extraction is worth checking separately. If the bot can automatically extract structured field values from freeform customer messages (order numbers, account IDs, product area), that reduces manual data entry at scale. It's the difference between fields being filled in correctly 80% of the time and 40% of the time.

What To Verify

Can you control field visibility (agent-only vs. customer-facing)? Can you filter and search by custom field values? Does the bot support AI extraction of structured data from freeform messages?

The data you collect internally is only useful if it connects to the systems your wider team already lives in.

7. Integrations

The list of supported integrations matters less than how they work.

Most tools will check the boxes for Jira, Salesforce, and Zendesk. What to ask: Is the sync bidirectional? A Jira ticket that doesn't update when the Slack ticket changes is the first thing to look for. Can you map fields between systems? Priority in your helpdesk should map to priority in Jira. Assignee should carry over. If field mapping requires custom engineering on your side, it's an export, not an integration.
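Field mapping with a bidirectional sync amounts to a translation table that works in both directions. The sketch below assumes made-up priority names and a generic "tracker" rather than any real Jira API. The functions `to_tracker` and `from_tracker` are illustrative only.

```python
# Hypothetical priority names on each side of the integration.
HELPDESK_TO_TRACKER = {
    "urgent": "Highest",
    "high": "High",
    "normal": "Medium",
    "low": "Low",
}
# The reverse table is derived, so the two directions cannot drift apart.
TRACKER_TO_HELPDESK = {tracker: helpdesk for helpdesk, tracker in HELPDESK_TO_TRACKER.items()}


def to_tracker(ticket: dict) -> dict:
    """Translate a helpdesk ticket into the tracker's field names and values."""
    return {
        "summary": ticket["title"],
        "priority": HELPDESK_TO_TRACKER[ticket["priority"]],
        "assignee": ticket["assignee"],
    }


def from_tracker(issue: dict) -> dict:
    """Translate a tracker issue back into helpdesk fields."""
    return {
        "title": issue["summary"],
        "priority": TRACKER_TO_HELPDESK[issue["priority"]],
        "assignee": issue["assignee"],
    }


ticket = {"title": "Export fails on large files", "priority": "urgent", "assignee": "caro"}
issue = to_tracker(ticket)
issue["priority"] = "High"        # an engineer lowers the priority in the tracker
print(from_tracker(issue))        # the change flows back to the helpdesk ticket
```

If a tool only offers the first function, changes made by engineering never reach the support team. That one-way behavior is what a trial should rule out.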

Evaluate these integration categories separately:

- Project management (Jira, Linear, Asana) for bugs and feature requests that need to live in an engineering workflow.

- CRM (HubSpot, Salesforce) for customer context alongside support history.

- Documentation (Notion, Confluence, GitHub) as knowledge sources for AI responses.

Each of these is a different problem. Make sure the tools you care about are connected well, not just listed on a features page.

What To Verify

Is the sync bidirectional? Can you map fields between systems without custom engineering? Do the integrations you actually need work well, or just exist?

Integrations keep data moving. Reporting tells you whether any of it is working.

8. Reporting and Analytics

Volume, first response time, resolution time: every tool has these. The more useful question is how the data is segmented.

Agent-level data shows capacity and workload balance. Who's handling more than their share? Has that been true for three weeks or three months? Customer-level data surfaces your highest-maintenance accounts and your best candidates for proactive outreach before they become a churn risk. Channel-level data tells you where your support load is concentrated. These cuts answer different questions. Aggregate numbers alone tell you something is wrong, but not where.
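A small worked example shows why the cut matters. The ticket records and the `average_by` helper below are invented for illustration. The numbers are not from any real team.

```python
from collections import defaultdict
from statistics import mean


def average_by(tickets, dimension, metric="first_response_minutes"):
    """Average a metric per value of one dimension (agent, customer, channel)."""
    buckets = defaultdict(list)
    for ticket in tickets:
        buckets[ticket[dimension]].append(ticket[metric])
    return {key: mean(values) for key, values in buckets.items()}


# Hypothetical sample data.
tickets = [
    {"agent": "ana", "customer": "acme", "first_response_minutes": 4},
    {"agent": "ana", "customer": "globex", "first_response_minutes": 6},
    {"agent": "ben", "customer": "acme", "first_response_minutes": 30},
    {"agent": "ben", "customer": "acme", "first_response_minutes": 40},
]

overall = mean(ticket["first_response_minutes"] for ticket in tickets)
print(overall)                          # one aggregate number
print(average_by(tickets, "agent"))     # where the delay actually sits
print(average_by(tickets, "customer"))  # which account is feeling it
```

The aggregate says first response averages 20 minutes. The agent cut shows one person at 5 minutes and another at 35, which is a workload or coverage question rather than a team-wide one.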

Two things make reporting useful in practice: automation and integration. A report that someone has to manually pull each week stops being pulled within two months. CSAT data that feeds into the same dashboard as volume and response time is more useful than CSAT data that lives in a separate place. The relationship between how busy your team is and how satisfied customers are is the thing worth watching.

What To Verify

Can you segment data by agent, customer, and channel? Are reports automated or manual? Does CSAT data live alongside volume and response time metrics?

Reports tell you what happened. The web app is where you deal with what's happening right now.

9. Customer Experience

The best Slack helpdesk is one that customers barely notice. They send a message. They get a response. It feels like talking to a person.

Visible ticket IDs, status-change notifications, bot language that reads like a machine: all of these introduce friction the customer didn't sign up for. Most of it is avoidable. Most of it is configurable.

Check whether you can control ticket visibility (whether customers see status messages at all). Check whether CSAT surveys are sent automatically upon closure. Check whether OOO auto-responses are available for after-hours coverage.

Multi-channel support (email, chat widget, support portal) matters if not all your customers reach you via Slack. Assuming they do is a reasonable starting point. Assuming it'll stay true as the customer base grows is less reasonable.

What To Verify

Can you hide ticket IDs and status updates from customers? Are CSAT surveys automatic? Does the bot support OOO auto-responses? Is there multi-channel support beyond Slack?

A good customer experience is the goal. For B2B teams especially, what customers can't see matters as much as what they can. Who has access to what. What gets logged.

10. Security and Permissions

For B2B teams, this section deserves more than a checkbox pass.

Role-based access control and confidential triage channels (not everyone in the workspace needs to see every customer conversation) are the operational basics. SOC 2 compliance and DPA support are the procurement basics. A full audit trail and Slack group-based team assignment round out the requirements for most enterprise buyers.

Whether the tool's security model can match your actual organizational structure is worth asking. Permissions that can only be set at the team level may not be granular enough for a company with multiple products, regions, or customer tiers. The further downstream you discover that gap, the more expensive it is to work around.
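To show what "more granular than team-level" can mean, here is a minimal sketch of permissions scoped by product, region, and customer tier. The `Grant` structure, the role names, and the `*` wildcard convention are assumptions for illustration, not a description of any tool's permission model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    role: str
    product: str = "*"   # "*" means any value
    region: str = "*"
    tier: str = "*"


def can_view(grants, role, product, region, tier) -> bool:
    """True if any grant for this role covers the ticket's product, region, and tier."""
    def covers(pattern, value):
        return pattern in ("*", value)

    return any(
        grant.role == role
        and covers(grant.product, product)
        and covers(grant.region, region)
        and covers(grant.tier, tier)
        for grant in grants
    )


grants = [
    Grant("support_emea", region="emea"),                  # everything in one region
    Grant("enterprise_desk", tier="enterprise"),           # one tier, all regions
    Grant("payments_oncall", product="payments", region="us"),
]

print(can_view(grants, "support_emea", "payments", "emea", "free"))
print(can_view(grants, "support_emea", "payments", "us", "free"))
```

A tool limited to team-level permissions can express the first grant at most. Whether it can express the other two is the question to ask before rollout.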

What To Verify

Does RBAC support your actual org structure, not just team-level permissions? Are there confidential triage channels? Is there SOC 2 compliance and DPA support? Does the audit trail cover permission changes, not just ticket activity?

Getting the security model right is table stakes. Getting the whole system running and your team using it correctly is harder. And it's what most teams underestimate during evaluation.

Choose the Helpdesk Features Your Team Needs

Not every feature here matters for every team. A five-person startup doesn't need workload-based routing. A 50-channel enterprise account probably does.

Evaluating the specifics matters for almost everyone. "Has SLA tracking" and "has SLA tracking with pause logic, tier-based thresholds, and tiered alerts" are very different things. The demo shows you that the feature exists. The trial shows you whether it works for your situation.

Teams that replace their helpdesk tool 18 months after buying it usually skipped that part.